The performance of Large Language Models (LLMs) is largely determined by their training data. Despite the proliferation of open-weight LLMs, access to their training data has remained limited, and the sheer scale of that data makes it all but inscrutable. Accessing and understanding this data is therefore essential for the reliability and security of LLMs. This talk presents two complementary directions: scalable search and indexing of training datasets, and training data attribution, that is, methods that estimate which sources may have influenced a model’s answer. We will discuss what these methods can and cannot reveal about the origins of model-generated information and show recent results from our work on indexing the Apertus training data.

Talk

Safety of Large Language Models: training data attribution and efficient search

March 19, 16:00 (CLOUD)

Speaker

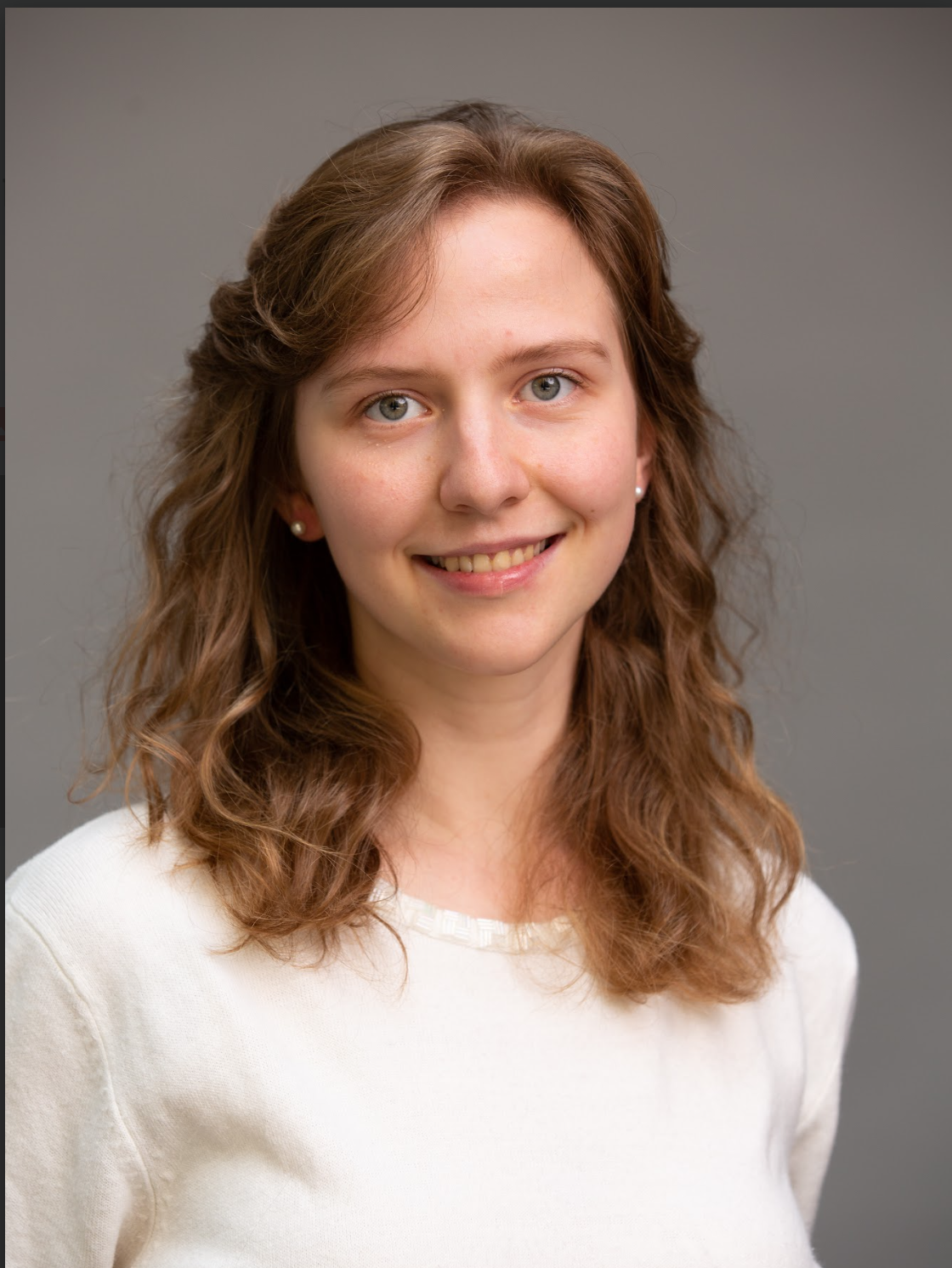

Anastasiia Kucherenko

Anastasiia Kucherenko is a postdoctoral researcher working on AI safety and security at HES-SO Valais-Wallis. She holds a PhD in Computer Science from EPFL, where her research focused on the security and privacy of distributed systems. She also completed cryptography internships at Microsoft Research (Redmond, USA) and the Institute of Science and Technology Austria.

Her current work focuses on LLM safety, training data attribution, and adversarial robustness in generative AI systems. She is part of the safety team for Apertus, Switzerland's open-source large language model, and works in close collaboration with the Swiss Cyber Defense Campus.